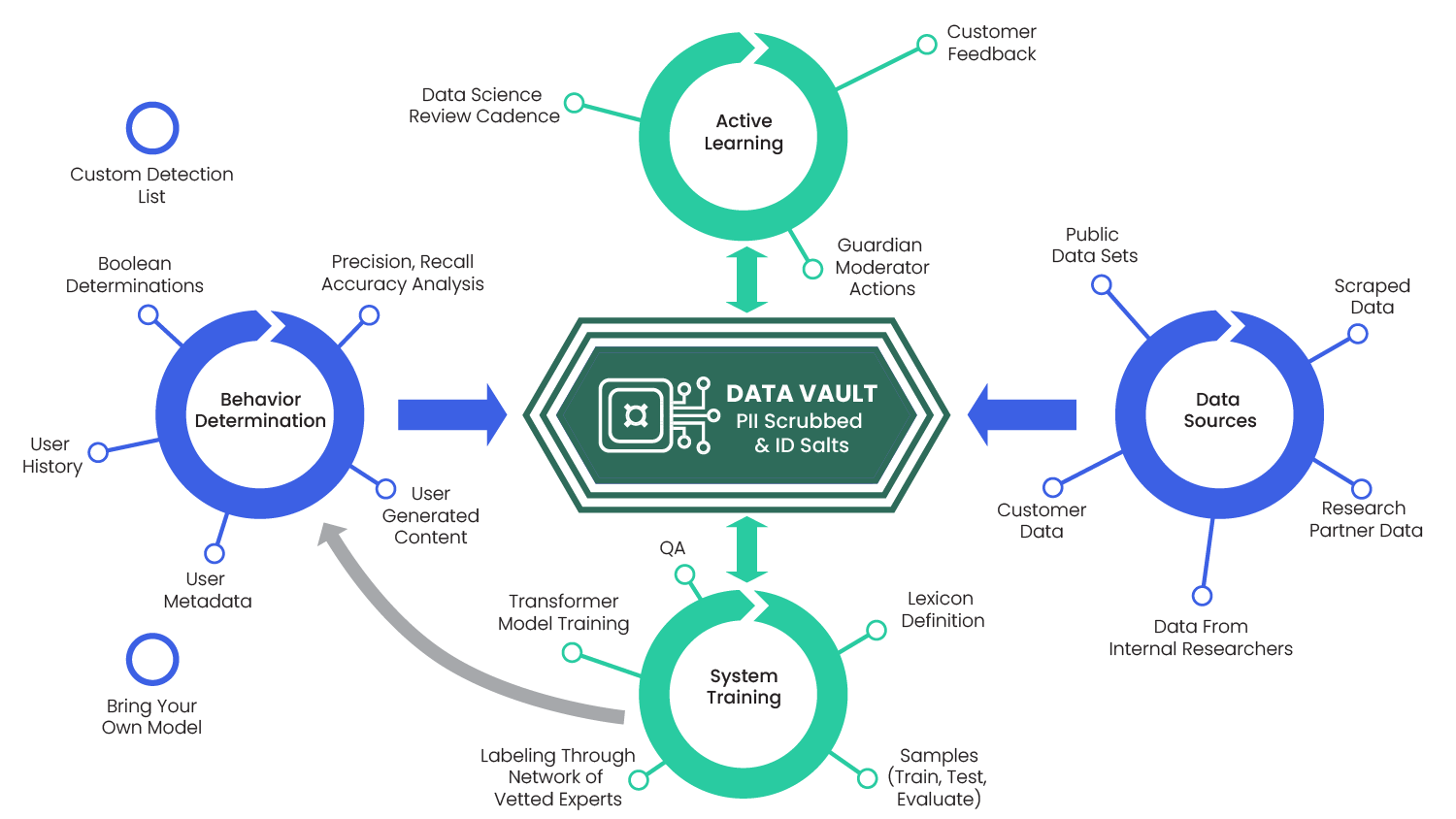

Advanced Behavior Systems are built from five interlocking subsystems:

- Data Sources
- System Training
- Behavior Determination
- Active Learning
- The Data Vault

Artificial intelligence (AI) is only as good as the data that goes into it. At Spectrum Labs, we have invested heavily in a rock-solid data operations workflow to build a broad and rich understanding of human behavior on our customers’ platforms that host user-generated content.

First, we ingest a variety of public data sets and, depending on the behavior to be captured, use scrapers to collect domain-specific public data. Second, Spectrum Labs works with well-recognized researchers in specific domains, such as those at Safe from Online Sex Abuse and the Center on Terrorism, Extremism, and Counterterrorism, to obtain specialized data sets.

Finally, Spectrum Labs has a Department of Research that conducts in-depth research on specific behaviors, and this research is also used as a data source. Gathering these data sources and feeding them into the system is an ongoing process.

To train the XLM-R (XLM-RoBERTa, a multilingual transformer) models, we first define a lexicon. Defining the lexicon is a crucial step: it specifies exact definitions of the range of behaviors we capture in text and audio.
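One way to picture a lexicon is as a closed set of behavior definitions that every label must come from. The sketch below assumes a simple in-memory structure; the `LexiconEntry` class, the `validate_label` helper, and the example behaviors are hypothetical illustrations, not Spectrum Labs' actual lexicon or tooling.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LexiconEntry:
    """One behavior in a hypothetical lexicon: a name, a definition, and examples."""
    behavior: str
    definition: str
    examples: tuple[str, ...] = ()


# Hypothetical entries for illustration only.
LEXICON = {
    entry.behavior: entry
    for entry in (
        LexiconEntry("profanity", "Use of obscene or vulgar language."),
        LexiconEntry("insult", "Language that demeans a specific person or group."),
    )
}


def validate_label(label: str) -> str:
    """Reject any label that is not defined in the lexicon."""
    if label not in LEXICON:
        raise ValueError(f"unknown behavior label: {label!r}")
    return label
```

Keeping the lexicon as the single source of valid labels means annotators cannot introduce ad hoc categories that the models were never specified to learn.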

The lexicon is the specification used for labeling. Large samples are taken from the data vault and then split into three data sets:

- a training data set
- a testing data set
- an evaluation data set

Through an extensive network of vetted native-language experts, the sample data is labeled according to the lexicon specification. The labeled training data set is then used to train the transformer models, while the labeled testing data set is used for quality assurance cycles.
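A common way to split a sample into training, testing, and evaluation sets is to hash each sample's ID, so a given item always lands in the same set and held-out data never leaks into training. The sketch below assumes string sample IDs and an 80/10/10 split; both the proportions and the helper names are hypothetical, not Spectrum Labs' documented settings.

```python
import hashlib


def assign_split(sample_id: str, train_pct: int = 80, test_pct: int = 10) -> str:
    """Deterministically assign a sample to 'train', 'test', or 'eval'.

    Hashing the ID (instead of drawing random numbers) keeps each sample in
    the same split across runs. Percentages are illustrative assumptions.
    """
    digest = hashlib.sha256(sample_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100  # integer in 0..99
    if bucket < train_pct:
        return "train"
    if bucket < train_pct + test_pct:
        return "test"
    return "eval"


def split_samples(sample_ids: list[str]) -> dict[str, list[str]]:
    """Group sample IDs into the three data sets."""
    splits: dict[str, list[str]] = {"train": [], "test": [], "eval": []}
    for sid in sample_ids:
        splits[assign_split(sid)].append(sid)
    return splits
```

For example, `split_samples([f"msg-{i}" for i in range(1000)])` yields three disjoint lists of roughly 800, 100, and 100 IDs.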

The trained models are then used at run time to determine behaviors at high speed and large scale.

Behavior determination follows this process:

The entire process is completed in under 20 milliseconds.
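To relate a per-request latency to daily volume, Little's law (L = λW) gives the average number of requests in flight. The sketch below assumes a hypothetical load of one billion requests per day spread evenly over 24 hours and a latency of 20 ms; real traffic is bursty, so peak concurrency would be higher.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400


def required_concurrency(requests_per_day: float, latency_s: float) -> float:
    """Average in-flight requests via Little's law: L = lambda * W.

    Assumes arrivals are spread evenly across the day (no peaks).
    """
    arrival_rate = requests_per_day / SECONDS_PER_DAY  # requests per second
    return arrival_rate * latency_s


rate = 1_000_000_000 / SECONDS_PER_DAY
concurrency = required_concurrency(1_000_000_000, 0.020)
print(f"{rate:,.0f} req/s, ~{concurrency:.0f} requests in flight")
# prints "11,574 req/s, ~231 requests in flight"
```

In other words, under these assumptions, staying under 20 ms per request at that volume means serving on the order of a few hundred requests concurrently on average.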

In terms of scale, our API currently processes billions of pieces of user-generated content (UGC) every day.

The labeled evaluation data set is used to automatically run accuracy, precision, and recall analyses.
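For a single behavior treated as a yes/no label, accuracy, precision, and recall compare model predictions against the expert labels in the evaluation set. The sketch below is a minimal pure-Python version; the `evaluate` function and its list-of-booleans inputs are assumptions for illustration, not the actual evaluation pipeline.

```python
def evaluate(y_true: list[bool], y_pred: list[bool]) -> dict[str, float]:
    """Accuracy, precision, and recall for one binary behavior label.

    y_true holds expert labels from the evaluation set; y_pred holds the
    model's determinations for the same items.
    """
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must be the same length")
    tp = sum(t and p for t, p in zip(y_true, y_pred))          # correctly flagged
    fp = sum((not t) and p for t, p in zip(y_true, y_pred))    # wrongly flagged
    fn = sum(t and (not p) for t, p in zip(y_true, y_pred))    # missed
    tn = len(y_true) - tp - fp - fn                            # correctly ignored
    return {
        "accuracy": (tp + tn) / len(y_true) if y_true else 0.0,
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
    }
```

For example, `evaluate([True, True, True, False], [True, True, False, False])` returns an accuracy of 0.75, a precision of 1.0, and a recall of about 0.667: every flag was correct, but one true instance was missed.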

Also worth noting: all UGC processed during the behavior determination cycle is fed into our data vault, where it is anonymized.

AI models improve through active learning, or "human-in-the-loop" tuning cycles.

Active learning combines customer feedback, moderator actions (e.g., de-flagging a piece of text that was incorrectly flagged as profanity), and regular reviews of model performance by Spectrum Labs' data science department. Together, these inputs are fed back into the data vault and the system training cycles.
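A moderator action such as de-flagging can be treated as a corrected label that overrides the model's earlier determination before the next tuning cycle. The sketch below assumes the vault is a simple dictionary keyed by (text ID, behavior); the `LabeledExample` class and both helper functions are hypothetical, not Spectrum Labs' actual data model.

```python
from dataclasses import dataclass


@dataclass
class LabeledExample:
    text_id: str
    behavior: str
    label: bool
    source: str  # e.g. "model", "moderator", "customer", "expert"


Vault = dict[tuple[str, str], LabeledExample]


def apply_moderator_action(vault: Vault, text_id: str, behavior: str, flagged: bool) -> None:
    """Record a moderator decision as a corrected label in the vault.

    A de-flag (flagged=False) overwrites an earlier positive determination,
    so the next training cycle sees the corrected label.
    """
    vault[(text_id, behavior)] = LabeledExample(text_id, behavior, flagged, "moderator")


def training_batch(vault: Vault, behavior: str) -> list[LabeledExample]:
    """Collect the current labels for one behavior for the next tuning cycle."""
    return [ex for ex in vault.values() if ex.behavior == behavior]
```

Usage: starting from `vault = {("t1", "profanity"): LabeledExample("t1", "profanity", True, "model")}`, calling `apply_moderator_action(vault, "t1", "profanity", flagged=False)` replaces the model's positive flag with the moderator's negative label.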

Spectrum Labs’ data vault is the world’s largest AI training data set built specifically to capture harmful and positive behaviors.

The anonymized data in the data vault (PII is removed, and identifiers are hashed with cryptographic salts) feeds into model tuning: with every API call and corresponding behavior determination, the data vault is enriched and the models improve. This flow of behavior determination data into the vault forms a flywheel.
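Salted hashing replaces a raw identifier with a digest that stays stable for the same input and salt but cannot be looked up in a precomputed table without the salt. The sketch below assumes a single secret salt and redacts only email addresses as an example of PII removal; `SALT`, `EMAIL_RE`, and the helper functions are hypothetical, and a production system would cover many more PII types.

```python
import hashlib
import re
import secrets

# Hypothetical secret salt; in practice it would be stored securely and
# rotated according to policy, never hard-coded or logged.
SALT = secrets.token_bytes(16)

# Simplified email pattern, for illustration only.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


def hash_identifier(user_id: str, salt: bytes = SALT) -> str:
    """Replace a raw identifier with a salted SHA-256 hex digest."""
    return hashlib.sha256(salt + user_id.encode("utf-8")).hexdigest()


def redact_pii(text: str) -> str:
    """Remove one kind of PII (email addresses) from free text."""
    return EMAIL_RE.sub("[EMAIL]", text)


def anonymize(record: dict[str, str]) -> dict[str, str]:
    """Produce a vault-ready record with no raw identifier or email."""
    return {
        "user": hash_identifier(record["user_id"]),
        "text": redact_pii(record["text"]),
    }
```

Because the same user ID always maps to the same digest under one salt, behavior patterns can still be linked across messages without storing who sent them. A keyed hash such as `hmac.new(SALT, user_id.encode("utf-8"), hashlib.sha256)` is a common alternative to prefixing the salt.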

Large language models (LLMs) have become very popular recently. However, behavior is determined by more than just the XLM-R model and its data.

Where Spectrum Labs’ technology and LLMs differ is that LLMs learn from the open internet and lack domain-specific active learning cycles. As a result, LLMs may learn things that are false (e.g., from Reddit), and the humans who provide feedback to the models may not know that they are false. This was seen recently in Google's launch demo of Bard, in which the LLM mistakenly attributed the first photo of a planet outside our solar system to the James Webb Space Telescope.

In Spectrum Labs’ case, the data the models learn from is carefully sourced and curated so that each model is trained on one specific type of behavior. Additionally, active learning with human feedback is carried out by specialists in Trust & Safety and in language. As a result, Spectrum Labs’ models are focused on a narrow domain, and reinforcement learning is fueled by experts in that domain.